This three part series is not a warm, fuzzy post about some AI strategic theater, though there are both strategic and tactical issues included. It’s a walk down some paths regarding practical issues. It’s about some of the challenging practical realities we face once product people try learning about or making tools work in actual workflows. You may feel some of my personal scar tissue in some of these passages. This is for semi-technical product people working with or learning several AI tools and dealing with some of the practical gotcha’s in making them go. It’s also raising a hand and calling BS on the spew of feed drivel about how “all you have to do is just set all this stuff up and crap magically happens.”

What’s Ahead? failures, data, workflow ops, context and prompting, evals, governance and kill switches, costs, complacency, and more, mostly related to smaller or mid-sized projects; though some ideas apply to all. This is a rather long series, even for me. But when I study and do things, I tend to go deep. As is often the case, these posts are really based on my notes to myself in my personal wiki over time, cleaned up somewhat and posted here to share.

These tools are great and I really enjoy them, even if there are some challenging spots, which we’re about to explore. Some of this stuff feels magical! And fun if you have that attitude. It really can be just fun and satisfying to see a workflow executing well if you’re working hands on. However, issues can quickly become a major hassle. It’s rarely as it is in so much of the feed fawning I see. On the surface, it looks like product managers, all of us really, are being sold a fantasy of frictionless AI tooling. It makes me wonder if some of these folks are actually using these tools for real. My own experience, and interacting with others or via Reddit and so forth, shows a usually more challenging and meandering path. That’s ok. I get it. The Happy Path is easier to write about and slap together a LinkedIn post or YouTube or whatever. Unfortunately, it also obscures some likely realities.

Here’s my message as we drive through the messy parts. You’re ok. It’s not you. These things can still be a little sloppy. Just keep pushing through.

Time to go deeper now. Maybe not all the way into the engine, but at least under the hood. We’re going somewhat hands on wheel here for some selected use cases so we’ll have more of a clue as to what’s going on when we come across things.

As long as this particular article series is going to be, it’s still only scratching the surface.

Basics

If you’ve bothered reading past the first section to here, chances are you’re a real practitioner, working with these tools at either or both strategic and individual contributor levels. There’s so many use cases and so many perspectives you the reader may have come with that there’s no way I can possibly hit them all. What’s below is a handful of use cases by which a lot of us are using these tools. I’ll try to give you the real deal practical advice on overcoming some of the basic obstacles you’d never think were there if you read so much of the feeds we’re seeing today. “JUST CONNECT YOUR AGENTS AND YOU CAN DO ANYTHING IN A DAY! Fire your staff, you contractors, even your mom. Like, Subscribe and Follow Me for More Tips, Great Looks and Wild Fortunes.”

Yeah.

It’s rarely that simple. Often, once you get going things are fairly smooth. But just configuring workflows can be challenging on occasion. Skim through here to see if there’s any use cases that apply to what you might want to experiment with and you’ll ideally find some tips that can get you over the problematic parts.

Just to level set my perspective, I did some dev type work early in my career, but those skills are long since atrophied as I’ve been doing core product work for a long time now. Still, I’m not sure how well I’d be navigating some of the bright shiny new things without having some historical comfort at an ancient-looking and yet still contemporary Unix shell prompt. For some of you/us, it may be worthwhile to take a quick back to basics course at that level if you want to learn/play/test some of these tools yourself. As I’ve suggested before, I believe even at senior management strategic levels it can be useful to get your hands dirty such that there’s some depth of understanding, even if your day-to-day is not going to be in these spaces. In any case, I’ve vibed my own trading bots and dashboards and lots more, partially for learning and work, but also for personal use. However, many of these things run in sandboxes or tightly controlled standalone environments. Where I’ve built or consulted on production projects, there’s a wholly different standard of care. My reactions here are in response to what I perceive as way to much noise vs. signal in the AI Boosterville crowd. Sure, we can wax philosophic about Singularities, and so on. But day-to-day? These are new tools in our toolboxes to make things. That’s it. Tools can be amazing, powerful and interesting. But really, we’re either building value or we’re not. What I’m trying to do is use their power while at the same time respect their sharp edges. I realize that’s a bit pragmatic, maybe even boring. It’s also what I see as a reasonably safe means to crafting new value vs. diving headfirst into the shallow end.

Strategic Slams Into Tactical

For some, their AI strategy seems to be, DO SOMETHING WITH AI NOW so we can check the box with our board or our investors or whomever. It doesn’t matter that a large percentage of projects fail or we haven’t sorted out the values yet; just that we’re not falling behind. This is absurd. Among the many challenges of AI there’s an issue similar to what’s happened with Agile development. Agile methods were originally meant to help make better products, but a side effect was to conflate Product Management and Project Management tasks. The results in some cases has been confusion and to the point where we’re increasingly seeing some rejections of Agile. Supposedly, the rationale for this recent rejection is AI helps accelerate past things like Sprint planning. Personally? I think it’s more that this provides some air cover to try to ditch it. I think people have been chaffing a bit under Agile and looking for a way to get out of it or hybridize it without seeming to go against canon. After all, you don’t want to be seen as some Waterfall product person from the early 1990s, do you? What does this metaphor have to do with AI adoption? I think something similar happens with AI in some cases. It’s where an “AI Strategy” is just to slap AI on to something. Anything, because it’s the Flavor of the Decade. This often isn’t strategy. It’s maybe tactical use of a new tool. And this may be a good thing. Or not. Either way, it’s not necessarily a strategic differentiator.

So step one has to be WTH are you doing, or more importantly, why? The plethora of service providers and decreasing costs with competition notwithstanding, most AI implementations at any scale are going to be a non-trivial cost. And even if some costs are decreasing, others are not. Remember, cost has at least two components, rate and consumption. Whether it’s gas for your car or crypto, there’s a cost per unit, then how many units you’re consuming. The cost per unit of AI compute for tokens, inferencing, whatever, may be coming down. At the same time, if your rate of usage is bending up at a higher rate, your whole cost structure may be changing. Besides potential supply side issues with everything from chips to compute to energy, usage may be going up faster than unit costs are coming down. The factors in this equation will change quickly over time, but seem to be highly dynamic as we go through the mid to late 2020s. (See Jevons paradox for how efficiency can lead to rise, rather than fall, in total consumption.) Strategy formation isn’t just about, “Have we come up with something new and differentiated,” at some point it’s about financial viability. A technically impressive or differentiated AI initiative that burns cash indefinitely, has poor ROI, or can’t scale economically is not a real strategy. It’s a science project or a vanity exercise. Which is fine for testing out something that at least seems plausible. As long as you’re clear about what you’re doing. Just deploying AI alone is arguably not a strategy.

What might you be doing? I break this down into five general use cases:

- We make actual AI “picks and shovels” type products and services. (Very few of us.)

- We use AI for internal ops. (Likely everyone at some point.)

- We use AI for Go To Market tasks. (Likely everyone at some point.)

- Some AI feature or function is a supporting part of our core product. (Many of us over time.)

- Some AI feature is a core part of our offering.

These are the most common buckets I see. Some organizations split out AI for strategic decision support, R&D acceleration, or compliance/risk as separate categories, though in practice they often fall under internal operations or go-to-market efforts.

What are you doing that’s strategic vs tactical? You should know because there’s a difference in how you might account for your efforts. For the “table stakes” business operations aspects, it’s all maybe just more P&L items and should be evaluated accordingly. For truly strategic attempts, the cost and effort are perhaps more of a bet and should be thought of as a different kind of investment and treatment.

General Failures

If you own product, there’s a variety of elements in your business where you likely have limited control. This is where that fun saying comes into play, “It might not be your fault, but it is your problem.” There’s a variety of excessively stupid discussions going on, (my opinion anyway), about how we’ll replace product folks with engineers who can do that job now or the opposite, how we won’t need coders because anyone can just spew syntax now. That’s a post for another day, though others are already covering a lot of this. The short version is this: all of us working in digital live along some continuum of technical vs. business focus. That’s almost always been the case. There’s some exceptions. There’s some senior leaders who “own” the digital portion of a business for whom the entire product is just a line item on a spreadsheet and a few direct reports. Regardless of where you are as a product person along those lines, chances are you’re often the “one throat to choke” when things go wrong; whether strategic, KPIs, or technical, whether you really “own” that pieces or not. So it behooves you to at least ask the right questions, and make sure someone has a hand on certain aspects of things.

So. For AI tooling… It almost doesn’t matter what tool you’re using, these things are potentially risky in terms of usage failure modes right now and will likely continue to be so for the next several years. The risk spectrum runs from a bit of waste, to losing significant money, to the terrible liability and emotional costs of potentially hurting people. Even spending billions on data centers and availability seems to not always keep up with the growth in any case. There are still major and minor failures here or there, from actual customer facing product to internal platform billing consoles. There’s obviously use case differences between using AI for internal studies, learnings, analysis, etc. vs. production tooling. But right now, I’m focused on production tooling. Have backups and failovers for any mission or safety critical MLOps; just as you likely always have for other aspects of your business. People seem to be putting AI tooling into critical paths for some ops, but “vibing” DevOps is probably not the best idea. Now, if the failure is someone can’t order the latest widget from an eComm site, that sucks, but ok, fixable. On the other hand, failures with something medical? Mission or safety critical? Maybe that vibey solution just turned into someone’s introduction to liability law.

If you’ve read any of my writing, you know I’m a huge fan of irony and can be a bit sarcastic on occasion. (Just somewhat.) Well, I personally think one of the greatest ironies of our whole interwebs is that they were designed to be distributed and decentralized for robust access. And yet, we tend towards centralization in a variety of ways that trashes this. We’ve all seen how an outage at a major AWS location can trash multiple products. This, in spite of multiple availability centers and such. What about Crypto? This is supposed to be the magically decentralized answer to sovereign finance and identity! Yet, as a practical matter, something in excess of 80% of dApp traffic goes through just two gateway providers. These should all be lessons for AI. Perhaps even especially so as agentic flows pile on multiple tooling dependencies, as we also scramble to slap on some crypto payment rails. (Because why not.)

What’s the point?

Learn from the history of “failure of imagination” in terms of failure modes. We seem to be building some potentially brittle workflows that may have cascading points of failure stemming from the smallest of of weak links. (Not to mention just a wrong path chosen by an AI.)

Data First

We’re not going too deep into data analytics or science here. However, a quick mention on data quality just has to have an honorable mention.

The atomic data elements themselves matter. Taxonomy matters. Ontology matters. General data hygiene matters. (BTW, Go re-read Heather Hedden’s Accidental Taxonomist. I say re-read because you likely already should have. If not, go get it. Or find other intros to taxonomy and also ontology. There’s a reason taxonomy issues are becoming more emergent when we build with AI.) And you really should understand taxonomies. In a paper called Building Taxonomies with AI from Graphwise, co-authored by Hedden, they say, “Contrary to popular belief, Generative AI (GenAI) and large language models (LLMs) do not replace taxonomies. Instead, taxonomies provide the semantic context that anchors GenAI,

reducing hallucinations and improving result accuracy. Furthermore, the relationship is reciprocal: GenAI can be leveraged in building taxonomies.”

It all matters… and it matters well before we start messing with the bright new shiny things and trying to deliver anything of value with the fancy tools.

Before you even get to any tools, this all bears consideration. One hobby I have is woodworking. Usually fine furniture. Before I cut a thing, I’ve got some plans. Or at least a clear idea. Then I need quality boards. They need checking for dryness with a moisture meter, checks for warping, cupping, and more. If I skimp and use crappy materials? Well, sometimes there’s some skills or cheats that can be used to cover things up and move forward. More often though, if using poor inputs you not only have a sub-optimal outcome, you end up amplifying bad precursors into even worse outcomes. Also, I find it useful to practice new techniques on scrap material. As with so many things, the learning can be in the doing. It’s generally less expensive to learn on scrap and adjust rather than quality materials. Yes, yes, I’m aware that “build out loud” and “launch and learn fast” are mantras. That might be fine. It’s always going to depend on the potential consequences. Just ask, what are your risk levels and where do you want to take them.

Now let’s leave aside things like ontology and taxonomy. Or how your data source, maybe an API or something, gets you just barely almost what you need, but not quite. Uh oh, this data’s not quite right. Maybe you have to stuff a little enrichment step in your flow. Damn. This is just another “thing.” It’s not just the code effort necessarily, it’s documenting in the data dictionary, maybe updating any “as built” diagrams required for some regulatory requirement, and so on. All this just begs for some “Your Data is So Ugly… How Ugly Is It?” jokes.

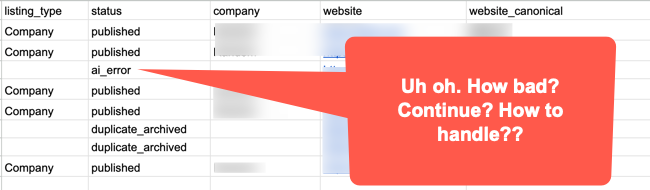

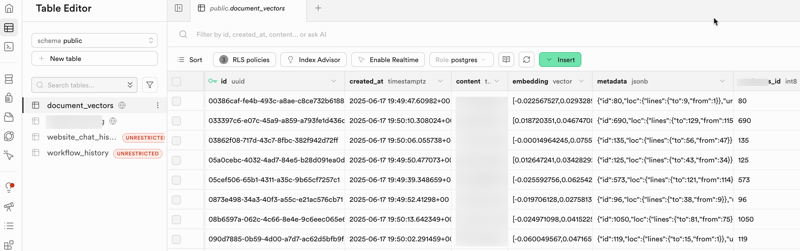

So what happens downstream with even simple data flows, or more so with multiple pipelines? Things in workflows can break. And when they do, one thing that can happen is you now have records or files that are wrong and maybe not synced with other systems. How are you going to fix just those and move on? Remember, once you go vector databases for example, clean up is probably harder. If you did a partial update to a vector database even if your source was text, you can’t always just search for simple text; unless you modeled it in there as metadata or your database allows for this. What might you have to do to back out? Did anyone set up batch numbers assigned to loads? Are there restore points? Is it easy to search for and fix the flaw? What else is running against all this right now, if anything? How big of a mess is there? How hard to clean up? All it takes is something dumb like phone numbers or zip codes with the wrong data type all of a sudden dropping zeros from the wrong places. What about conversions?

Is this a Product person’s responsibility anyway? Maybe not. You still better make sure it’s all on someone’s checklist. If your dev counterparts say, “#$# off, I got this,” that’s fine. I worked on a healthcare project once where IoT devices might report blood pressure in mmHg (the standard most clinicians expect) but sometimes in kPa (kilopascals) instead. Without explicit unit labeling and normalization, a machine will just process the raw number, potentially turning a normal reading into a critical alert, or missing a real problem entirely. That’s a case where it might be obvious to human eyes, but a machine will just process. It’s also a case where a real critical clinical workflow for sick or injured people might be impacted. How’s that vibecoding speed looking now? Would you treat your mama with that code? Your kid?

These are more of those things that are maybe not your job, but it’s still your product. Did you allocate the right resource(s) to deal with this? Sure, it’s the old story about garbage in and garbage out. But AI workflow can amplify the crap out of bad upstream data showing up later in tragic ways. Or worse, it won’t amplify right away. It’ll be hidden. Until it’s not. More work needs to be done upfront to get data pristine clean. It’s can be messy work. Someone needs to be assigned to it early on. How does this happen? Or why more with AI? Think about simple compounding effects. In multi-step agentic flows, one agent’s bad output becomes the next agent’s input. Errors cascade and get “confidently” baked into downstream decisions. And even worse, unlike obvious crashes in old systems, AI can produce plausible-looking but wrong results that could be harmful. An old-fashioned simple rule might reject bad data, where an AI might try to make sense of it somehow, even if it has user or system prompts with instructions for some cases. The cleaner you are to start, the better off you’ll ideally be. That’s obviously always been true, but more so now.

Fire, Aim, Ready, Aim

If your agentic workflow uses five different tools with different usage caps, time windows, and rate limits, what happens when one stage runs faster than the others can handle? Does it win? No. No it does not win. It can create retries, dropped work, or cascading failures across the workflow. This is not quite the classic kind of race condition from concurrent computing. It’s closer to a throughput mismatch or dependency-coordination problem in a distributed workflow.

The lesson here isn’t just about rate throttles for agents or tools. It’s about any form of dependency. Are your workflows robust enough to deal with it? Or if you’re putting more faith in the reasoning capability of an agent, are it’s skills up to the task of handling delayed or stopped processes? What might it do. What will it do? Tune in next time for the exciting conclusion of… WTF did your agent just do. Or not do. Who does the deals in your shop? Is it tech leadership? Product? Strategy? Someone has P&L here.

Let’s add in one more of today’s realities. Regardless of your Service Level Agreements (SLAs), your AI feed could fail these days. Even the top providers, (maybe especially the top providers), are sometimes victims of their own success. The compute availability for the entire industry may be outpacing supply. Some who can afford it are apparently actually burning base foundational models right on to chips. That has or will have its own issues. It’s also not something most of us are going to do anytime soon. Though who knows. if I predict it won’t be soon, I could be proven wrong next week when someone starts selling them on eBay! Possibly with a whole Anthropic based model since that code base is supposedly by accident out there in the wild now, in spite of attempts to take it down. (Though take-downs may be challenging as if as they claim a lot of their own code is written by AI, it might not even be copyrightable. We’ll see. Either way, more rather thick irony.)

Anyway, if your AI workflow is a critical path for your core product, you have some serious challenges. If it’s “just” one aspect, you should have solid failure modes.

Now here’s some reality we’re not used to. Many futurist bobbleheads talk about things like a “post scarcity” society, etc. Well, that’s mostly crap. Or rather, OK, maybe it’s truer than it’s been throughout human history given our worldwide industrial capabilities. But it’s not even across the board. (I’ll leave out details of caveats I’ve made in the past about brittleness and disruption. Suffice it to say, no one espoused the wonders of abundance during things like pandemics, wars or accidental disruptions in various single or low number of sources supply chains.) At least as of 1Q2026, every data center is battling for position to get a limited production run of Nvidia chips. As a practical matter, one company, ASML makes the high end gear to manufacture such things. Yes, there’s other chip fabs, and the world is building more. But right now, there’s very real physical limits. Even leaving aside data center build pressure and energy requirements, this is one industry category that might have a “how are we going to keep the lights on” problem. We all know what the answer will be regarding access, right? What it has to be? Pricing. We’ll see how things shape up, but for everyone that’s gotten used to cloud pricing coming down over time, we may be in a little bit of a different period for awhile. The point is, watch out for this and if it does come to pass, even just for a time, some ROI calculations might look very different. Edit: Even as I was just finishing up this post, I found that Anthropic has just raised Claude usage prices on April 4th.

Wrapping Up for Now

And these are the first big points. Before we even get to prompts, agents, or whatever the latest shiny workflow stack is, we’re already dealing with strategy confusion, bad data, brittle dependencies, and failure modes that most demos politely ignore. In Part 2, I want to go from those broad realities into the actual tooling layer: workflows, exceptions, observability, skills, and what “context” really means in practice.

Part 2: Practical Tactical AI Tool Challenges: Workflow Reality, Context, and Prompting