For all the product tools we have, from customer profiles to journey maps, there’s been little use of prediction markets to inform decisions. Unlike AI-driven predictive analytics, prediction markets remain rare in product work. With generative AI pushing teams toward faster experimentation and shipping, they may be worth another look. I became interested in this while playing with a few markets myself and wondering how they might apply to product work.

Should We Test These Tools More?

We all want to know what is going to happen next. Or more precisely, what we can do to improve the odds of our preferred outcomes. We study history to predict or change the future. That’s why computer scientist Alan Kay’s classic line still works: “The best way to predict the future is to invent it.” We do that through decisions and actions, sometimes by analysis, sometimes by instinct, usually by some messy combination of both. Add emotion, incentives, incomplete information, and changing conditions, and you get the reality that product people live in every day. (Well… everyone really.)

These are all bets we make… A political contest, a sporting event, a company roadmap, a new product launch. They’re all bets of different kinds. We all make probabilistic bets when we look at the weather and decide whether to bring an umbrella.

If so much of marketing and product management is already about making bets under uncertainty, it’s reasonable to ask whether prediction markets can help us make better ones.

What a Prediction Market Actually Is

A prediction market is a market where people trade on the likelihood of a future event. The price becomes a rough signal of collective probability. Let’s try a simple model. Suppose a “yes” contract pays $1 if the event happens and $0 if it does not. A market price of $0.70 then implies a probability of about 70%, or exactly so assuming a perfect and liquid market. For example, if “Feature X launches by June 30” is trading at 70 cents, the market is effectively saying there is about a 70% chance that happens.

If you were playing for money, you’d pay $0.70 per contract. If the event happens, you receive $1 at settlement, so your profit is $0.30 per contract. (That works out to a return of about 42.86 percent on the money you risked; the math is return = profit / risk, or $0.30 / $0.70.) If the event does not happen, the contract settles at $0, so you lose the $0.70 you paid.

In that sense, the market price is doing two things at once. It suggests the crowd’s rough probability estimate, and it determines the upside and downside of the bet. In practice, the market price moves over time as traders react to new information, so different people may buy or sell the same contract at different implied probabilities. (Just note one nuance: on an exchange, the price is not just abstractly “the market’s belief.” It is the current tradable price available in the order book, which may be affected by liquidity and order flow at that moment.) Also, read this: The Price Is Not the Probability: Why Everything You Think You Know About Reading Prediction Markets Is Wrong
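The contract arithmetic above can be sketched in a few lines of Python. This is a minimal sketch, not any exchange's API: the function names are made up for illustration, fees are ignored, and `own_probability` (your personal estimate, as opposed to the market's) is an assumption added to show why two people can look at the same price and disagree about whether to buy.

```python
def implied_probability(price: float, payout: float = 1.0) -> float:
    """Probability implied by the price of a contract paying `payout` on yes, 0 on no."""
    if not 0 < price < payout:
        raise ValueError("price must be strictly between 0 and the payout")
    return price / payout


def profit_if_yes(price: float, payout: float = 1.0) -> float:
    """Profit per contract if the event happens."""
    return payout - price


def return_on_risk(price: float, payout: float = 1.0) -> float:
    """Profit divided by the amount risked (the price paid)."""
    return profit_if_yes(price, payout) / price


def expected_profit(price: float, own_probability: float, payout: float = 1.0) -> float:
    """Expected profit per contract under YOUR probability estimate (hypothetical input).

    Win (payout - price) with probability p, lose the price with probability 1 - p.
    """
    win = own_probability * (payout - price)
    loss = (1 - own_probability) * price
    return win - loss


if __name__ == "__main__":
    price = 0.70  # the "Feature X launches by June 30" example
    print(f"implied probability: {implied_probability(price):.0%}")
    print(f"profit if yes:       ${profit_if_yes(price):.2f}")
    print(f"return on risk:      {return_on_risk(price):.2%}")
    # Someone who privately believes the odds are 80% sees a positive expected profit.
    print(f"expected profit at 80% belief: ${expected_profit(price, 0.80):.2f}")
```

If your own estimate equals the market price, the expected profit is zero, which is one way to read the claim that the price reflects the crowd's rough probability.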

This does not mean the market is right. It means it has produced a live probability estimate based on the information, incentives, and biases of the participants. That alone makes it interesting.

There is also research suggesting play money can sometimes work nearly as well as real money, though results seem mixed. I haven’t been able to find anything conclusive. Risk changes behavior, and not everyone is playing the same game even when it looks like they are. Any use of these tools should account for incentives, risk tolerance, and basic game theory.

Planning Poker Is Sort Of In This Space

Product and engineering teams already have one familiar tool for surfacing uncertainty. Have you ever used planning poker? The first estimate in the room is rarely the whole truth. Different people see different dependencies, hidden work, technical risk, and ambiguity. Planning poker helps drag some of that into the open. It is not quite Wisdom of Crowds, but maybe something like a confluence of familiar experts. (Kind of like the Delphi Method.)

Planning poker and prediction markets do share some traits. Both expose distributed knowledge. Both can reveal that what looked obvious was not obvious at all. Both can help a team move past the loudest voice or highest-ranking person declaring reality. But they’re not the same.

Planning poker is mostly about estimating effort, complexity, or relative size. It is an internal exercise. A prediction market is about the likelihood of a future outcome, though it can also be run as an internal exercise. Will this ship on time? Will adoption cross a threshold? Will this experiment actually move the number we say it will? One is mainly about sizing the work. The other is about pricing the odds.

That difference matters. Product teams often confuse desire, confidence, and probability. Planning poker can expose disagreement about effort. Prediction markets, at least in theory, can expose disagreement about outcomes.

A Short History

Prediction markets are not new. Betting on uncertain outcomes is ancient. An obvious question: “Isn’t this really just the same as sports betting?” Maybe sort of. But not quite. A prediction market is broader. It can cover politics, economics, weather, product launches, court rulings, or almost any clearly resolvable event. The contract structure is also often cleaner in prediction markets. Another difference is the stated purpose. Prediction markets are often talked about as information aggregation tools. The pitch is not just entertainment, but that market prices may reveal collective beliefs about likely outcomes. Sportsbooks are mostly framed as gambling products, even if the odds also contain information. So ok, maybe the line between these is thin, but the purpose is clearly different.

More formal prediction has been used in economics and forecasting for decades, and companies have experimented with internal markets for sales, hiring, project completion, and demand forecasting. Research has documented use at Google, Ford, Hewlett-Packard, Intel, Nokia, Siemens, Microsoft, and others, though it never became mainstream enterprise practice. (See Corporate Prediction Markets: Evidence from Google, Ford, and Firm X.) Google used internal markets to forecast OKRs and milestones. Ford used them for sales forecasting and to test the likely success of product feature decisions.

So no, this is not a new idea. What feels newer is asking whether prediction markets have real value for business questions and product management. Amid the deafening noise around AI, the topic may be unlikely to grab much mindshare right now. I have not yet used this in a formal PM setting, so I’m not trying to sell it here. I’m just exploring the idea out of interest and wondering whether it resonates, or whether people who have tried it see issues I’ve not found yet.

Predictions Are Not the Same as Feature Voting

Customer service surfaces requests. Feature portals ask people what they want. Prediction markets ask what people think will happen. Those are not the same question. Voting is preference. Prediction is expectation. Preference can influence prediction, of course, and some argue incentives like money or prizes improve results.

Customers may want a feature that almost nobody will use. Internal stakeholders may like a roadmap item that has low odds of success or of shipping on time. A prediction market is not there to tell you what is right. It’s there to aggregate belief about outcomes. That distinction might make prediction markets more interesting than ordinary suggestion boxes. It also makes them riskier if people confuse desire with probability.

Where This Might Help PMs?

Prediction markets are not magical idea machines. They seem more useful when the question is specific, observable, and resolvable rather than open-ended. For product teams, that could include questions like:

- Will this launch happen by date X?

- Will adoption cross threshold Y within 90 days?

- Will churn in segment Z improve after release?

- Will the experiment beat control by enough to matter?

- Will the feature actually move the KPI the team claims it will?

Or, for external customers, things like:

- Will at least 25% of surveyed target customers say they would pay for this premium tier?

- Will at least 30% of current customers say feature X would materially increase their usage?

- Will at least 15% of target prospects say they would switch from a competitor if this feature existed?

This isn’t a replacement for existing analytics, surveys, interviews, or experiments, but another input. Google Cloud has even framed prediction markets as useful where there is not enough historical data for machine learning alone, and as something that can be combined with ML rather than treated as a substitute. Here’s their guideline. Deloitte made a similar point from a strategy angle, arguing that internal prediction markets can surface signals that do not show up cleanly in traditional planning or in any single team’s view of the world.

This all feels directionally right to me. Product teams often do not lack opinions. However, they may lack clean mechanisms for exposing over-confidence, disagreement, and hidden information.

Is Anyone Doing This Now?

Yes, but not at a level that would make product leaders call it standard practice. There is solid evidence of internal corporate use, as mentioned earlier. More recently, most of the energy seems to be in public prediction markets such as Polymarket and Kalshi, where the focus is politics, finance, sports, geopolitics, and public events rather than internal product questions. At the same time, some consultants and strategists still use internal-style markets to surface uncertainty and non-obvious signals.

As of this writing, Polymarket’s main international platform blocks U.S. users, while Polymarket US is being rolled out separately under QCX LLC d/b/a Polymarket US, a CFTC-regulated Designated Contract Market. Kalshi can serve U.S. users because it already operates in that same regulated framework. Of course, access is only one issue. Taxes, reporting obligations, and local legal restrictions still apply. These platforms are not product-specific tools, but they can offer signals on broader market trends or consumer sentiment. And even when you can’t trade, you can usually still observe activity.

What’s Holding These Tools Back?

The barriers are clear enough. Prediction markets require participation, incentives, trust, and cultural buy-in. You need enough people with relevant information, willing to engage honestly, on questions written clearly enough to resolve. That is asking a lot from most organizations or customers.

Then there’s the politics. Teams may resist market questions that expose low confidence in a launch. Executives may not enjoy seeing live prices suggest that a cherished initiative has a 32% chance of landing on time. And employees may trade strategically, socially, or defensively rather than truthfully.

Research on corporate markets found that they can be useful, but also that they are not immune to optimism bias, overreaction, and internal strategic behavior. That sounds familiar because we can’t escape organizational psychology just because we put numbers on a screen.

What a Good PM Prediction Tool Would Need

This part is opinion. It’s based on my reading and research, not real-world experimentation yet.

A useful product-oriented prediction market would need clearly written questions, a known resolution source, a defined time window, and incentives that reward being right rather than simply being loud. It would also need guardrails on who participates and on which questions belong there.
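One way to make those requirements concrete is to treat each market question as a small record that gets checked before it goes live. This is a rough sketch under my own assumptions, not an existing tool: the `MarketQuestion` class, its field names, and the specific checks are all hypothetical, and the dates and group names in the usage example are made up.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MarketQuestion:
    """Hypothetical spec for one internal market question."""
    text: str                          # the question as participants see it
    resolution_source: str             # who or what decides the outcome
    opens: date                        # start of the trading window
    closes: date                       # end of the window / resolution date
    eligible_groups: tuple[str, ...]   # guardrail on who may participate

    def problems(self) -> list[str]:
        """Return a list of reasons this question is not ready to be listed."""
        issues = []
        if not self.text.strip().endswith("?"):
            issues.append("question should be phrased as a yes/no question")
        if not self.resolution_source.strip():
            issues.append("no resolution source named")
        if self.closes <= self.opens:
            issues.append("time window is empty or reversed")
        if not self.eligible_groups:
            issues.append("no eligible participants defined")
        return issues


if __name__ == "__main__":
    question = MarketQuestion(
        text="Will Feature X launch by June 30?",
        resolution_source="Public release notes",      # made-up source
        opens=date(2026, 4, 1),                        # made-up dates
        closes=date(2026, 6, 30),
        eligible_groups=("engineering", "product"),    # made-up groups
    )
    print(question.problems() or "ready to list")
```

Simple checks like these cannot tell you whether a question is too fuzzy or too political. That still takes human judgment. They only catch the mechanical omissions.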

Not every product question should be marketized. Some are too fuzzy. Some are too political. Some are too easily gamed. And some are better answered by experiments, customer research, or direct operational data.

On the Dark Side

This is where things can get ugly, at least in a few cases. How or why someone might game simple corporate attempts at discovery is another question, though sharp elbows and ethically questionable competition come to mind. Look at how SEO gets implemented sometimes. Or the outright cesspool of fraud that’s part of programmatic advertising.

One recent example of manipulation was especially odd. In March 2026, Times of Israel military correspondent Emanuel Fabian wrote that Polymarket bettors sent him death threats in an effort to pressure him to alter reporting tied to a market about whether Iran had struck Israel on a given day during the 2026 conflict involving the U.S., Israel, and Iran. The broader concern is not just that people bet on terrible things, but that money can create incentives to influence how events are recorded or resolved. The Guardian has reported similar pressure on outside information sources tied to market resolution. If you look at these companies, you might think, “How did they not see this coming and account for it?” But I can also see how normal people miss this. Even when you are planning for the darker side of things, it can be hard to imagine such “out there” behavior. These problems will likely be mitigated over time, just as other online products have had to adapt to uglier parts of society.

This kind of behavior would hopefully matter less in straightforward product research. I mention it only because leaving it out would leave an incomplete story. Once real money and public attention are involved, some participants will not just try to predict the event. They will try to shape it, shape the narrative, or tamper with the source of truth.

This isn’t a small design problem. It’s a structural problem. And maybe it’s a reason why the cost / benefit might not make sense for marketing research use just yet, given quality or risk issues. Though one would think (or hope, at least) that most of us would have less incendiary questions.

AI, Oracles, and the Resolution Problem

Prediction markets need resolvers. In the crypto space, especially for onchain prediction markets and tokenized real-world assets, that role falls to oracles (systems that bring outside data or event outcomes onto a blockchain so contracts can react to something that happened in the real world). More generally, markets need some accepted source of truth to determine what happened. That sounds simple, but it’s not.

If the resolution source is weak, delayed, manipulated, ambiguous, or attackable, the market becomes vulnerable. That is one reason to be careful about casual enthusiasm for AI as resolver, referee, or truth engine. AI can summarize, classify, and assist. But if you elevate it too far into canonical truth-setting, you create an attractive failure point. Remember where these systems get their information, especially current information. Think about SEO and the growing cottage industry around AEO, answer engine optimization, or whatever this week’s term is. It is another arms race, and the bad guys are in it too.

What this really comes down to is that ground truth is hard. We have moved from a few trusted news sources to a cacophony of noise, which in turn requires an identity and reputational framework we still have not solved. Ironically, in some specialized areas we have the opposite problem: very few sources and vendors to verify against, with weather data as one example.

And unlike ordinary software bugs, resolution failures can directly move money, incentives, and behavior. So yes, prediction markets need resolution systems. But that may be the least glamorous and most important part of the whole design.

Wrapping Up

I will finish with a truth that feels wholly unsatisfying, but probably more honest than the alternatives.

On the pessimist side, the closest we are going to come to getting this right if we try it for product purposes is somewhere between 1% and 99%. However, on the optimist side, with sensible design and testing, perhaps we can get things 90% right 90% of the time. Assuming we can even agree on what “right” means in some of these cases.

I have a sense that these tools will grow into a next thing. Because we’re always looking for next things. Though I don’t think prediction markets can be a full replacement for product judgment. They are not a shortcut around customer research. They’re not a substitute for experimentation. They’re one more tool for dealing with uncertainty, and like most tools, they can be helpful, theatrical, or dangerous depending on how they are used.

For product management, that may be the most useful framing. Not certainty. But maybe better odds, better visibility into confidence?

And maybe that is enough to move some needles.

Bonus Items: A Couple of Prediction Market Helper Tools

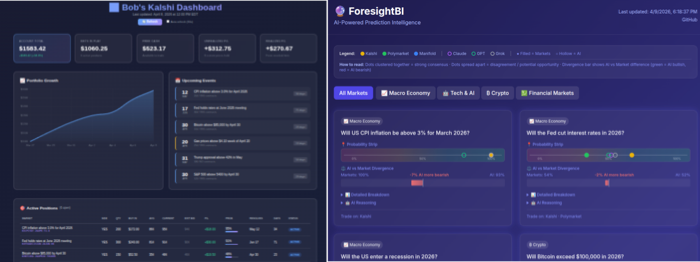

While playing with these tools, I built a couple of small helper apps: one is a simple tracking dashboard for Kalshi, and the other looks for divergence of opinion across multiple markets and AIs to try to come up with a composite score and deviations. These are not specific to marketing or products per se, though they may offer some large-scale directional info on macroeconomic issues. They were built (of course) with assistance from my OpenClaw bot. I’ve tested them on two different systems: Linux/Ubuntu and Mac. Use at your own risk.
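To give a feel for what a composite score with deviations can mean, here is a minimal illustration. It is not the code from the helper apps: it assumes the composite is a plain unweighted average of probability estimates, and the source names and numbers are invented for the example.

```python
from statistics import mean, pstdev


def composite(estimates: dict[str, float]) -> dict:
    """Combine probability estimates for the same event from several sources.

    Returns the unweighted average, the spread (population standard deviation),
    and each source's deviation from the average.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    values = list(estimates.values())
    center = mean(values)
    return {
        "composite": center,
        "spread": pstdev(values),
        "deviations": {name: p - center for name, p in estimates.items()},
    }


if __name__ == "__main__":
    # Invented numbers: two markets and one AI model pricing the same yes/no event.
    result = composite({"market_a": 0.60, "market_b": 0.70, "model_c": 0.80})
    print(f"composite: {result['composite']:.2f}, spread: {result['spread']:.3f}")
    for name, dev in result["deviations"].items():
        print(f"  {name}: {dev:+.2f}")
```

A large spread is the interesting signal here. It says the sources disagree, which is a prompt to go look at why rather than to trust the average.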

See Also:

- Corporate Prediction Markets: Evidence from Google, Ford, and Firm X

- Edge Master Class 2015: A Short Course in Superforecasting

- Good Judgment

- Manifold Markets

- 3 ways internal prediction markets can surface strategic signals amid data noise (Deloitte)

- Top Market Research Companies Using Prediction Markets

- Hypermind

- Cultivate Labs