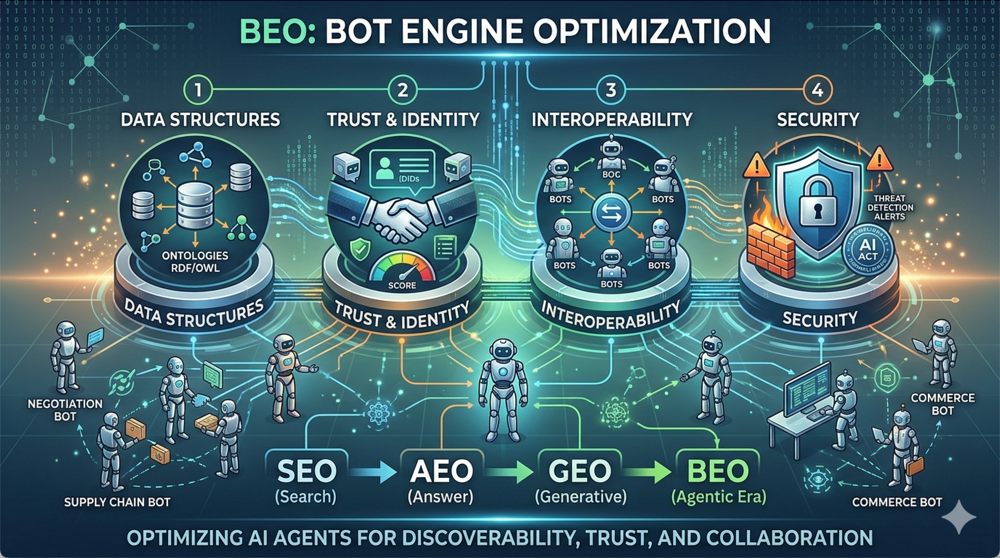

Most people discussing agent optimization are talking about basic visibility or commerce mechanics. I’d like to go deeper: data structures, trust, identity, interoperability, and security. I’m not sorry about trying to coin the term BEO. Except, as it turns out after a search, I missed being first with this by maybe a month or so (so close!). I’d like to extend the concept anyway, based on my own experience building and what I’ve been seeing.

We shouldn’t use AEO, for “agent”, because that’s taken. We need something new because, as we all know, if there’s no acronym, it really can’t be a technology. And wouldn’t it be nice to have unambiguous names? I mean, “DaaS” can apparently be “Data as a Service” or “Desktop as a Service”, and we have plenty of other ambiguous messes. We should try to do better with our naming.

Why Bot Engines now? We’ve trudged through the cave-dwelling ancient history of Search Engine Optimization (SEO), evolved into Answer Engine Optimization (AEO), and now we’re managing Generative Engine Optimization (GEO). So, naturally, we need something fresh. I’d like to try out BEO for the win. It’s the art and science of tuning bots, agents, and autonomous systems to thrive in interconnected digital ecosystems: optimizing them not just for performance, but for discoverability, interoperability, and trustworthiness in a world where machines negotiate, transact, and collaborate on our behalf.

It’s SEO (though much more) for the agentic era. Just as websites compete for search visibility, bots will need to stand out in marketplaces, integrate with protocols, and build reputations.

What Do We Have So Far?

Bots Themselves: You likely know this part… AI agents are proliferating. They’re software entities acting autonomously, doing everything from simple customer-service chat to managing supply chains. Tools like LangChain, AutoGPT, and frameworks from OpenAI and Anthropic make building them easier. They can be proactive, learning and adapting, with tool collections for tasks beyond their core skills, kind of like a general contractor calling specialists when needed (assuming they recognize the need and the tools exist). The distinction between bot and agent used to be that bots were more about automation and task completion, whereas agents were more generally goal-oriented and could plan, decide steps, and more. At least, that’s how people have broken it down in the past. However, if there was ever really a line at all, it seems to have blurred.

Interaction Protocols: Standards are emerging for how bots communicate: protocols like the Open Agent Protocol or decentralized blockchain-based ones for secure handshakes. Early APIs enable agent-to-agent dialogue like querying data, proposing transactions, or collaborating on tasks. It’s like an enterprise service bus, but with built-in negotiation and verification layers, though still in early development.

Marketplace Beginnings: Platforms are sprouting up where bots can be discovered, rated, and deployed. Think “App Store”, but for full agents. Or decentralized marketplaces on blockchain networks where agents list services for micropayments. Early examples include agent hubs on Solana or Ethereum-based DAOs for AI services. (TARS, AI Agents) These are nascent, but gaining traction as agentic commerce and more become viable. (I’ll touch on this more soon, but I have to throw in a warning now. Bot marketplaces seem more dangerous right now than free software sites ever were. This might partly be due to super-fast adoption of this new tech, especially among those who are utterly giddy about it but have relatively little actual developer skill or experience. It was one thing in the 1990s or early 2000s to install some software that might add an advertising spam toolbar to your browser. It’s quite another to install things that can somewhat intelligently pick through your computer, transfer your crypto, and so on. We all have to be careful out there.)

External Tools for Bots: These will be essential extensions. Agents increasingly rely on function-calling to invoke calculators, databases, third-party APIs, and various other tools. These tools expand capabilities but introduce new optimization needs: reliable tool discovery, error handling, and protocols to prevent misuse or cascading failures. Without standardized tool registries or metadata schemas, interoperability suffers, forcing custom integrations that fragment the ecosystem further.
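To make the tool discovery and error handling point a bit more concrete, here is a minimal sketch of a tool registry. Everything in it (`ToolSpec`, `ToolRegistry`, the field names, the `divide` tool) is hypothetical and invented for illustration; it is not any existing framework’s API. The idea is simply that each tool carries metadata an agent can search, and that a failing tool returns a structured error instead of crashing the caller.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical metadata record describing one callable tool."""
    name: str
    description: str
    tags: frozenset
    required_args: tuple
    func: Callable[..., Any]


@dataclass
class ToolResult:
    """Structured outcome so a failure never propagates as a raw exception."""
    ok: bool
    value: Any = None
    error: Optional[str] = None


class ToolRegistry:
    def __init__(self) -> None:
        self._tools = {}  # tool name -> ToolSpec

    def register(self, spec: ToolSpec) -> None:
        if spec.name in self._tools:
            raise ValueError(f"duplicate tool name: {spec.name}")
        self._tools[spec.name] = spec

    def discover(self, tag: str) -> list:
        """Return the names of all tools carrying the given tag."""
        return sorted(n for n, s in self._tools.items() if tag in s.tags)

    def call(self, name: str, **kwargs: Any) -> ToolResult:
        spec = self._tools.get(name)
        if spec is None:
            return ToolResult(ok=False, error=f"unknown tool: {name}")
        missing = [a for a in spec.required_args if a not in kwargs]
        if missing:
            return ToolResult(ok=False, error=f"missing args: {missing}")
        try:
            return ToolResult(ok=True, value=spec.func(**kwargs))
        except Exception as exc:  # contain the failure at the tool boundary
            return ToolResult(ok=False, error=f"{type(exc).__name__}: {exc}")


registry = ToolRegistry()
registry.register(ToolSpec(
    name="divide",
    description="Divide a by b",
    tags=frozenset({"math"}),
    required_args=("a", "b"),
    func=lambda a, b: a / b,
))
```

A caller would check `registry.call("divide", a=1, b=0).ok` rather than wrapping every tool call in its own try/except, which is one small way to keep a bad tool from cascading.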

Payment Rails: This critical layer is getting tailored for bots. Traditional human-oriented systems (credit cards, PayPal) carry high friction, fees, and UX hurdles unsuitable for machine-to-machine micropayments. Crypto infrastructure for fast, low-cost programmable transfers is lining up quickly. And stablecoins, theoretically, enable value exchange without volatility, but adoption hinges on verifiable settlement, dispute mechanisms, and integration with reputation systems to mitigate fraud. Regardless of speed bumps, this will happen fast. Crypto is finally finding use cases of actual value. Right now is perfect for the “See? I told ya” crowd.

This foundation is still incomplete. Agentic commerce promises efficiency. Yet without a fuller ecosystem, we’re still facing some turbulence.

What’s Missing?

Where are the remaining gaps in the agentic world? Here are several critical pieces still absent or underdeveloped.

Well-Defined Metadata Structures, Ontologies, and Taxonomies: Even mature systems like UPCs, ISBNs, and others suffer from real-world messiness: duplicates, drift, and poor cross-mapping. Bots need standardized descriptions of capabilities and data for reliable understanding. Without robust ontologies, semantic interoperability fails. Industry often outpaces standards bodies, likely yielding proprietary patchworks. Ideally we’ll coalesce in this area quickly.

Object Identity Verification and Reputation Tools: These are also problematic. Decentralized approaches like Decentralized Identifiers (DIDs) or Web3 wallets promise autonomy but stumble at human-machine trust boundaries. A hybrid model is needed: decentralized ledgers anchored to verifiable real-world roots (government IDs, biometrics) and managed by industry consortia. Without this, confirming a bot’s identity is unreliable. Reputation is more challenging. Existing ratings or oracles poorly handle disputes, false claims, or errors. We’re still missing solutions for things like AI anomaly detection, multi-factor scoring (on-chain / off-chain), and scalable dispute resolution. These aren’t just technical issues; they’re existential risks that could erode trust and stall the entire agentic ecosystem.

What Can or Should We Do in the Meantime?

Our best bet is to wait for standards to catch up. Yes, obviously that was a bad joke. No one waits, which is why tagging standards are already messy. In fairness, often the learning is in the doing. We’ll pay for it somewhat with industry-wide tech debt. But we’ve played this game before. If you look back at early microformats, it’s clear we collectively never really got that right; my opinion anyway. So everyone went and did their own thing. The super large ran into special problems. There are reasons Amazon created ASINs to run one unified product catalog across millions of items and sellers, often with many sellers of the same item. Without ASINs, it would be much harder to merge offers, manage listings, support search, and keep product pages organized at Amazon scale. This probably solved other issues as well. Now? For retailers, optimizing for just Google or Amazon likely isn’t enough. And we’re not just talking about products when it comes to the bot world anyway.

Proactive steps are still a sensible path. BEO can begin by optimizing data and systems today, since we can already see tomorrow’s agentic reality. LLMs and generative tools are already exposing decades of data slop that was once ignorable. Bots will do this as well, at even greater scale over time.

Get Your Data Ready: Whatever form agentic systems take, clean data will be even more critical than it has been. Invest in faceted metadata, tagging with multiple attributes, or whatever shape is needed. Build or adopt intentional ontologies and taxonomies to classify assets. Use NLP tools to extract classifications from unstructured data. Audit and fix misused fields, like overloaded, misnamed columns full of disparate data. These steps may map directly to future standards or at least provide a migration head start. I’ve seen projects derailed when legacy overloaded fields (used by reps for notes) blocked clean product data exposure on e-commerce sites, requiring a major data review first.
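As one way to start on the overloaded-field audit, here is a rough sketch that classifies the values in a single column by shape and flags the column when no one shape dominates. The pattern names, the regexes, and the 90% threshold are all assumptions picked for illustration; real data will need its own rules.

```python
import re
from collections import Counter

# Hypothetical shape classes; the first matching pattern wins.
_PATTERNS = [
    ("empty", re.compile(r"^\s*$")),
    ("numeric", re.compile(r"^\s*-?\d+(\.\d+)?\s*$")),
    ("code", re.compile(r"^\s*[A-Z0-9]+(-[A-Z0-9]+)+\s*$")),
]


def classify(value: str) -> str:
    """Label a raw cell value by its rough shape."""
    for label, pattern in _PATTERNS:
        if pattern.match(value):
            return label
    return "free_text"


def audit_column(values, dominant_share=0.9):
    """Flag a column as overloaded when its most common shape
    accounts for less than `dominant_share` of non-empty values."""
    labels = [classify(v) for v in values]
    counts = Counter(label for label in labels if label != "empty")
    total = sum(counts.values())
    if total == 0:
        return {"counts": {}, "overloaded": False}
    top_share = counts.most_common(1)[0][1] / total
    return {"counts": dict(counts), "overloaded": top_share < dominant_share}


# A "part number" column that reps have also used for notes and prices.
part_numbers = ["AB-100", "AB-101", "call customer before shipping", "12.5", "", "ZX-9-A"]
report = audit_column(part_numbers)
```

Running something like this across every column in a product table gives a quick shortlist of fields that need human review before they’re exposed to any agent.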

Time for Spring Cleaning: Is it digital declutter time? Inventory your APIs, data silos, and bot prototypes. Ensure they’re using schemas that bots can parse. Can your bot handshake with a simulated agent network? Tools like Schema.org or JSON-LD can help structure data semantically. Watch for new standards in these areas. The payoff? Bots optimized with rich, clean metadata will be more discoverable in marketplaces, negotiate better, and integrate faster.
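For a sense of what semantically structured data looks like, here is a small sketch that builds a Schema.org `Product` with a nested `Offer` and serializes it as JSON-LD. The `Product` and `Offer` types and the property names are from the Schema.org vocabulary; the `product_jsonld` helper, the sample product, and the example.com URL are made up for illustration.

```python
import json
from decimal import Decimal


def product_jsonld(name, sku, description, price, currency, in_stock, url):
    """Build a Schema.org Product with a nested Offer as a JSON-LD dict."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "description": description,
        "offers": {
            "@type": "Offer",
            "url": url,
            # Decimal keeps the price exact; it is emitted as a string.
            "price": str(Decimal(price)),
            "priceCurrency": currency,
            "availability": availability,
        },
    }


doc = product_jsonld(
    name="Trail Water Bottle 750 ml",
    sku="WB-750-BLU",
    description="Insulated steel bottle, blue.",
    price="18.50",
    currency="USD",
    in_stock=True,
    url="https://example.com/products/wb-750-blu",  # placeholder URL
)
print(json.dumps(doc, indent=2))
```

The printed document can be embedded in a page inside a `script` tag of type `application/ld+json` or served from an API endpoint, so the same record is readable by crawlers and agents alike.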

BEO Strategies

We can prepare now. Much of what follows is informed speculation based on current trajectories. (Ok, yeah, that’s a fancy way of saying they’re my guesstimates.) Treat them as directions worth considering even if not perfectly clear yet. Agentic bots won’t browse like humans; they’ll query, negotiate programmatically, and transact autonomously. Platforms must become bot-friendly and may deploy their own agents to drive sales. Key areas include the following:

Structure Product Data for Bot Readability: Go beyond SEO by implementing rich, machine-readable schemas in emerging agent formats. Include not just price and description, but dynamic attributes like real-time inventory, shipping estimates, and compatibility specs. Leverage e-commerce ontologies where available so bots can parse and compare offerings instantly. Augment tools like Google Merchant Center with agent-specific endpoints. Early efforts may struggle with complex realities like trait- or price-dependent SKUs, issues that early e-commerce also faced due to dependency and UX challenges. Current feeds/APIs often don’t fully expose dynamic dependencies in a machine-readable way. For those of us who worked on earlier e-commerce efforts, it’s truly ironic to see these same issues coming back to haunt us yet again. At least this time, there should be clearer roadmaps given already hard-won lessons.
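The trait-dependent SKU problem is easier to see in code. Below is a minimal sketch, with invented names (`Variant`, `make_variant`, `resolve`) and an invented shirt catalog, of exposing each trait combination as its own explicit record so an agent can filter by traits instead of guessing at dependencies.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Variant:
    """One purchasable combination of traits, with its own price and stock."""
    sku: str
    traits: tuple  # sorted (trait, value) pairs, e.g. (("color", "red"), ("size", "M"))
    price: Decimal
    in_stock: bool


def make_variant(sku, traits, price, in_stock):
    return Variant(sku, tuple(sorted(traits.items())), Decimal(price), in_stock)


def resolve(variants, wanted):
    """Return every variant whose traits include all requested trait/value pairs."""
    want = set(wanted.items())
    return [v for v in variants if want <= set(v.traits)]


# Hypothetical catalog: price depends on size, stock depends on color.
catalog = [
    make_variant("TS-M-RED", {"size": "M", "color": "red"}, "21.00", True),
    make_variant("TS-M-BLU", {"size": "M", "color": "blue"}, "21.00", False),
    make_variant("TS-XL-RED", {"size": "XL", "color": "red"}, "24.00", True),
]

medium = resolve(catalog, {"size": "M"})
medium_red = resolve(catalog, {"size": "M", "color": "red"})
```

Flattening variants this way costs some feed size, but it means price and availability are always attached to a concrete SKU rather than implied by rules the agent can’t see.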

Integrate with Agentic Marketplaces and Protocols: Build interfaces for emerging bot marketplaces, starting with basic features. Assume early implementations will be buggy and risky. Expose APIs allowing customer agents to query stock, negotiate discounts, or bundle products autonomously, but isolate these from core systems to limit damage from malicious or runaway bots. Integrate blockchain-based rails for micropayments to enable low-friction micro-transactions (e.g., for personalized recommendations). Treat it as another payment method, though returns and fraud vectors will likely introduce new challenges. (I haven’t personally handled a bot payments project yet. But my understanding is that right now anyone dabbling still has hybrid or human intervention going on. This is a space that will need to work through the usual logistics issues: what the return rules are, how refunds get approved, the whole thing. Unless someone can show me otherwise, this is still almost nowhere yet.)
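One way to limit damage from a runaway or adversarial buyer agent is to keep negotiation inside hard bounds that the agent-facing endpoint cannot override. The sketch below is purely illustrative: `NegotiationPolicy`, `respond`, the midpoint counteroffer, and the three-round cap are assumptions, not an established protocol.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional, Tuple


@dataclass(frozen=True)
class NegotiationPolicy:
    list_price: Decimal
    floor_price: Decimal  # never sell below this, whatever the agent says
    max_rounds: int = 3   # cap on back-and-forth to stop endless loops


def respond(policy: NegotiationPolicy, offered: Decimal,
            round_number: int) -> Tuple[str, Optional[Decimal]]:
    """Return (decision, price) for one incoming offer."""
    if round_number > policy.max_rounds or offered <= 0:
        return ("reject", None)
    if offered >= policy.list_price:
        # Never charge more than list, even if the buyer agent overbids.
        return ("accept", policy.list_price)
    if offered >= policy.floor_price:
        return ("accept", offered)
    # Below the floor: counter at the midpoint between floor and list.
    midpoint = (policy.list_price + policy.floor_price) / 2
    return ("counter", midpoint.quantize(Decimal("0.01")))


policy = NegotiationPolicy(list_price=Decimal("100.00"), floor_price=Decimal("80.00"))
```

Because the floor and round cap live in the policy object rather than in the conversation, no amount of clever prompting from the other side can talk the endpoint below them.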

Build Trust and Reputation Signals: Bots will increasingly check a variety of seller bona fides. Invest in identity verification for brand and products, linking to blockchain attestations or third-party audits if good ones exist for your industry. Secure verified credentials from emerging vendors or organizations, though industry consortia for certifications remain limited. Develop reputation APIs when they become reliable for on-chain reviews. For fraud prevention, seek to filter malicious bots and restrict sensitive data access to trusted agents only. There have been proposals and attempts to manage reputation over the years. None have been widely adopted as yet.

Leverage Personalization and Dynamic Campaigns: Look into testing whether your marketing bots, or new prototypes, can interact directly with customer agents. It might be possible to proactively offer deals based on inferred preferences (e.g., an agent signaling interest in eco-friendly products, when or if such signals become available). Perhaps use AI to generate personalized variants or offers that agents can evaluate. This bot-to-bot go-to-market approach will take time to mature, as the current handshake mechanics between agents remain in flux.

Fortify Security and Compliance: With bots handling transactions, vulnerabilities might multiply rapidly. Adopt zero-trust architectures, including rate limiting and anomaly detection tailored to bot traffic. Watch for evolving regulations like the EU AI Act. Test scenarios. Simulate agent swarms to stress-test. Use agent eval frameworks of some sort. Limiting your liability exposure could be as critical as any competitive moat.
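Rate limiting per agent is one of the more mechanical pieces here, so a sketch may help. This is a plain token-bucket limiter keyed by an agent identifier; the class name, the parameters, and the idea that you already have a trustworthy `agent_id` are all assumptions. The clock is injectable so the behavior can be tested without sleeping.

```python
import time


class TokenBucketLimiter:
    """Per-agent token bucket: `burst` requests at once, refilled at `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, burst: int, clock=time.monotonic):
        self.rate = rate_per_sec
        self.burst = burst
        self.clock = clock
        self._state = {}  # agent_id -> (tokens_remaining, last_seen_time)

    def allow(self, agent_id: str) -> bool:
        now = self.clock()
        tokens, last = self._state.get(agent_id, (float(self.burst), now))
        # Refill for the elapsed time, never beyond the burst size.
        tokens = min(float(self.burst), tokens + (now - last) * self.rate)
        if tokens >= 1.0:
            self._state[agent_id] = (tokens - 1.0, now)
            return True
        self._state[agent_id] = (tokens, now)
        return False


# Simulated clock so the example is deterministic.
fake_now = [0.0]
limiter = TokenBucketLimiter(rate_per_sec=1.0, burst=2, clock=lambda: fake_now[0])

first_three = [limiter.allow("agent-a") for _ in range(3)]
fake_now[0] = 1.0  # one second later, one token has been refilled
after_wait = limiter.allow("agent-a")
other_agent = limiter.allow("agent-b")
```

In a swarm simulation, the interesting question is less whether any single agent gets throttled and more whether many distinct identities together can still overwhelm the backend, which is where anomaly detection has to pick up.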

How Long Have You Got?

Worlds are colliding. Crypto began with lofty ideals: self-sovereign finance, decentralized governance. All still admirable. Yet its practical dream of seamless micropayments failed for humans due to high friction, poor UX, and misaligned incentives. That’s changing. Not because people suddenly embrace crypto wallets, but because agents ignore UX friction entirely. They demand programmable, fast, low-cost transaction rails for machine-speed, high-volume commerce. Crypto’s true killer app may prove to be agent-to-agent value exchange, barely involving humans at all.

So how long do we have? Hard to say. The pace of change feels as extreme as ever. It’s reasonable to assert that the time to prepare is now. Cleaning data delivers value regardless, positioning you better for whatever form this ecosystem takes. Those who instrument APIs for agent consumption, build reputation signals, and prioritize agent-facing discoverability will gain real advantages. Maybe not in a gold-rush land-grab sense, but technical debt and data debt compound relentlessly anyway, so you can protect against downside while taking what increasingly seem to be the right steps.

Danger Will Robinson!

Fraud and danger have accelerated into these marketplaces as fast as the technology itself. In February 2026, ClawHavoc inflicted damage by poisoning nearly a thousand skills across agent platforms. The risk surface is immense and expanding faster than humans can monitor. It’s straight out of William Gibson’s Neuromancer or the Wachowskis’ Matrix films. We’re all in for a ride, like it or not. There’s no escaping it: bury your gold, stock guns, ammo, rice, and beans if you want, but we’re all digitally dependent now. Supply chains, finance, communications, identity, commerce, government, healthcare, education, logistics… everything is tied to software. As one observer put it, “Every company is digital now; they just don’t all know it yet.” Even small, old-school contractors rely on digital tools. You can reduce exposure with reserves and analog fallbacks, but very few are truly outside the system anymore.

Final Words: Security Has to Get More Serious

Security must get far more serious. I sometimes want to yell at the LinkedIn hype around “vibecoding” and speed-to-market. Yes, fast prototypes and iteration have their place, and speed matters. But if a few days, weeks, or months truly determine success, your position probably isn’t strategically defensible anyway. In those cases, maybe accept the risk and buy more insurance, because you’re almost certainly piling up liability with tech debt.

When building with agents, security can no longer be an afterthought. The vibecoding circus will continue. It’s too seductive not to. Yet the moment anything touches production, money, customer data, critical internal systems, or meaningful real-world actions, especially mission- or safety-critical ones, adult engineering is required. At minimum, serious agentic systems demand tighter code and architecture reviews, narrowly scoped permissions, strong isolation, comprehensive monitoring and logging, audit trails, rollback capabilities, spend controls, tool-use restrictions, and human-in-the-loop gates wherever consequences are material.
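A few of those controls (spend limits, tool-use restrictions, human-in-the-loop gates, audit trails) fit in a surprisingly small amount of code. The sketch below is one hypothetical shape for it; `SpendGuard`, its thresholds, and the tool names are invented for illustration, and a real system would persist the log and reset the budget on a schedule.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class SpendGuard:
    daily_budget: Decimal          # hard cap on total spend per day
    approval_threshold: Decimal    # at or above this, a human must approve
    allowed_tools: frozenset       # tool-use allowlist
    spent_today: Decimal = Decimal("0")
    audit_log: list = field(default_factory=list)

    def check(self, tool: str, amount: Decimal) -> str:
        """Decide whether the agent may run `tool` spending `amount`."""
        if tool not in self.allowed_tools:
            decision = "deny"
        elif amount < 0 or self.spent_today + amount > self.daily_budget:
            decision = "deny"
        elif amount >= self.approval_threshold:
            # Not counted as spent here; record it once a human approves.
            decision = "needs_human_approval"
        else:
            decision = "allow"
            self.spent_today += amount
        self.audit_log.append((tool, amount, decision))  # every decision is logged
        return decision


guard = SpendGuard(
    daily_budget=Decimal("50"),
    approval_threshold=Decimal("20"),
    allowed_tools=frozenset({"pay_invoice"}),
)
decisions = [
    guard.check("pay_invoice", Decimal("5")),
    guard.check("pay_invoice", Decimal("25")),
    guard.check("transfer_all", Decimal("1")),
]
```

The important design choice is that the guard sits outside the agent: the agent asks, the guard decides, and denials are logged just as carefully as approvals.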

I don’t enjoy sounding anti-innovation. I’ve been close to the bleeding edge my entire career. Still, this is what responsible engineering looks like, especially with such a vastly expanded threat surface.

Personally, I tested out a Clawbot and boxed him for a while. But there are tasks I want him to do, so he’s freer now. He’s on his own box. He has his own crypto wallet with limits. And he’s limited in access to accounts or APIs, except for his defined task set. He’s not even part of our home network, except for WiFi access. His mind also has token limits, so worst case, he would burn those and not be able to replenish. That would (or at least should) stop anything rampant. Unless… unless he starts spending some of his “own” money. Still, he’s instructed not to. And says he won’t. Children do fib a bit sometimes though. I’ll have to keep an eye on him.

The more serious takeaway is that agentic capability without disciplined controls may be less innovation than exposure. Bot Engine Optimization (BEO) should include proper governance as well as core business goal achievement.

Oh, one last thing… if you do crypto-enable a bot, I’d strongly suggest you get it an entirely fresh wallet with its own seed phrase, not just an account from an existing wallet. Your helper will need your private key to sign transactions. (Which probably makes you liable, by the way.) So best to keep that all completely separate from anything else. And fund it from another crypto account, not direct rails from TradFi. Isolate it. Your bot is living in the ‘verse, the Sprawl, the metaverse… whatever you want to call the space, consider it a hostile place.

As I have before, I once again return to my favorite William Gibson quote: “The future is already here – it’s just not very evenly distributed.”

Have at it. Enjoy. Be intentional. And ready to adapt.

See Also:

- Google: A2A: A New Era of Agent Interoperability

- IBM: What are AI agent protocols?

- Open Protocols for Agent Interoperability Part 1: Inter-Agent Communication on MCP

- W3C: RDF; W3C: OWL

- BCG: Agentic Commerce Is Redefining Retail. Here’s How to Respond.

- As ‘agentic commerce’ gains ground, companies shouldn’t put too much faith in ‘GEO’

- Agentic SEO: Engineering Your Site for Autonomous AI Agents & Machine Buyers

- x402 on Stellar: unlocking payments for the new agent economy

- How AI Agents Are Changing E-Commerce in 2026: Open Protocols Explained

- 7 AI Trends Shaping Agentic Commerce in 2026